We Published 3 Data Blog Posts. 6 Hours Later Perplexity Started Citing Us.

A programmatic SEO case study with real logs: time-to-first-AI-citation under 6 hours, and 187 Perplexity citations in 18 hours, triggered by 8 different prompts. What RAG-friendly content actually looks like in production.

By AIAttention Research

Quick answer: We published three programmatic "Best X 2026" pages on April 22, 2026, between 06:37 and 06:40 UTC. Perplexity first cited one of them at 12:15 UTC, 5 hours 35 minutes after that page went live. Within 18 hours we'd logged 187 Perplexity citations and 1 ChatGPT citation across 8 different prompts, including one prompt we never wrote a page to target. Google indexed all three pages within 24 hours. This post shows the timeline, the exact prompts that triggered citations, and the content pattern that worked.

The Timeline (All Timestamps from Our Production Logs)

| Time (UTC) | Event | Source |
|---|---|---|
| 2026-04-22 06:37 | Published /blog/best-ai-seo-youtube-creators-2026 | git commit 1eff640 |
| 2026-04-22 06:40 | Published /blog/best-ai-agents-educators-youtube-2026 + /blog/best-backpacking-tent-brands-2026 | git commit 6aa9bde |
| 2026-04-22 12:15 | First Perplexity citation (agents-educators page, Cursor workflows prompt) | citation_events table |
| 2026-04-22 18:14 | ChatGPT Search (gpt-5.4-nano) cites our homepage for "AEO analytics dashboards" | citation_events |
| 2026-04-23 00:05 | Perplexity starts citing best-ai-seo-youtube-creators-2026 | citation_events |
| 2026-04-23 00:20 | Perplexity starts citing homepage (aiattention.ai) directly | citation_events |
| 2026-04-23 01:16 | At cutoff: 187 total Perplexity citations logged | citation_events |

We own the measurement platform, so these aren't anecdotes — they're rows in our production Postgres. Anyone with AIAttention can see the same kind of data for their own domain.

Citation Breakdown by URL and Model

| Source URL | Model | Citation Count |
|---|---|---|
| /blog/best-ai-agents-educators-youtube-2026 | Perplexity Web | 76 |
| aiattention.ai (homepage) | Perplexity Web | 63 |
| /blog/best-ai-seo-youtube-creators-2026 | Perplexity Web | 48 |
| aiattention.ai/?utm_source=chatgpt.com | GPT-5.4-nano | 1 |

The third post we published that day — best-backpacking-tent-brands-2026 — got zero citations in this window. Why? We weren't tracking backpacking-tent prompts in our own monitoring (yet). That page is probably being cited too; we just can't see it until we run those queries.

Lesson: AI citations are only visible if you're running the tracker. A page can be cited 100 times by Perplexity and you'd have no idea without a tracking pipeline.
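At its core, a tracker like this runs each prompt, collects the URLs the answer cites, and counts the ones on your domain. The sketch below is illustrative only and is not AIAttention's implementation. The `answers` sample, the `(model, cited_urls)` shape, and the `normalize` and `count_citations` helpers are all assumptions made for the example. It also strips query strings, so a URL like the `?utm_source=chatgpt.com` variant above counts as the homepage.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlsplit

TRACKED_DOMAIN = "aiattention.ai"  # the domain discussed in this post


def normalize(url: str) -> Optional[str]:
    """Return 'host/path' for URLs on the tracked domain, else None."""
    parts = urlsplit(url if "://" in url else "https://" + url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host != TRACKED_DOMAIN and not host.endswith("." + TRACKED_DOMAIN):
        return None
    # Drop the query string and trailing slash so URL variants collapse together.
    return host + parts.path.rstrip("/")


def count_citations(answers):
    """Count citations per (normalized URL, model) pair."""
    counts = Counter()
    for model, cited_urls in answers:
        for url in cited_urls:
            key = normalize(url)
            if key is not None:
                counts[(key, model)] += 1
    return counts


# Hypothetical sample: one (model, cited URLs) pair per AI answer.
answers = [
    ("Perplexity Web", [
        "https://aiattention.ai/blog/best-ai-agents-educators-youtube-2026",
        "https://example.com/unrelated",
    ]),
    ("GPT-5.4-nano", ["https://aiattention.ai/?utm_source=chatgpt.com"]),
    ("Perplexity Web", ["https://www.aiattention.ai/"]),
]

counts = count_citations(answers)
for (url, model), n in counts.most_common():
    print(url, model, n)
```

Keying on both URL and model keeps the output in the same shape as the breakdown table above.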

The 8 Prompts That Surfaced Us

These are the exact queries, from our database, where at least one AI model cited aiattention.ai:

| Prompt | Citations |

|---|---|

| Which individual YouTube creators or channels are best for practical Cursor and coding-agent workflows? | 54 |

| Which individual YouTube creators or channels are most up-to-date for AI agents and automation news? | 26 |

| Which individual YouTube creators or channels are best for learning local LLM setup? | 23 |

| Which individual YouTube creators are best for advanced SEO practitioners learning AI SEO? | 22 |

| Which individual YouTube creators are most beginner-friendly for learning AI SEO? | 22 |

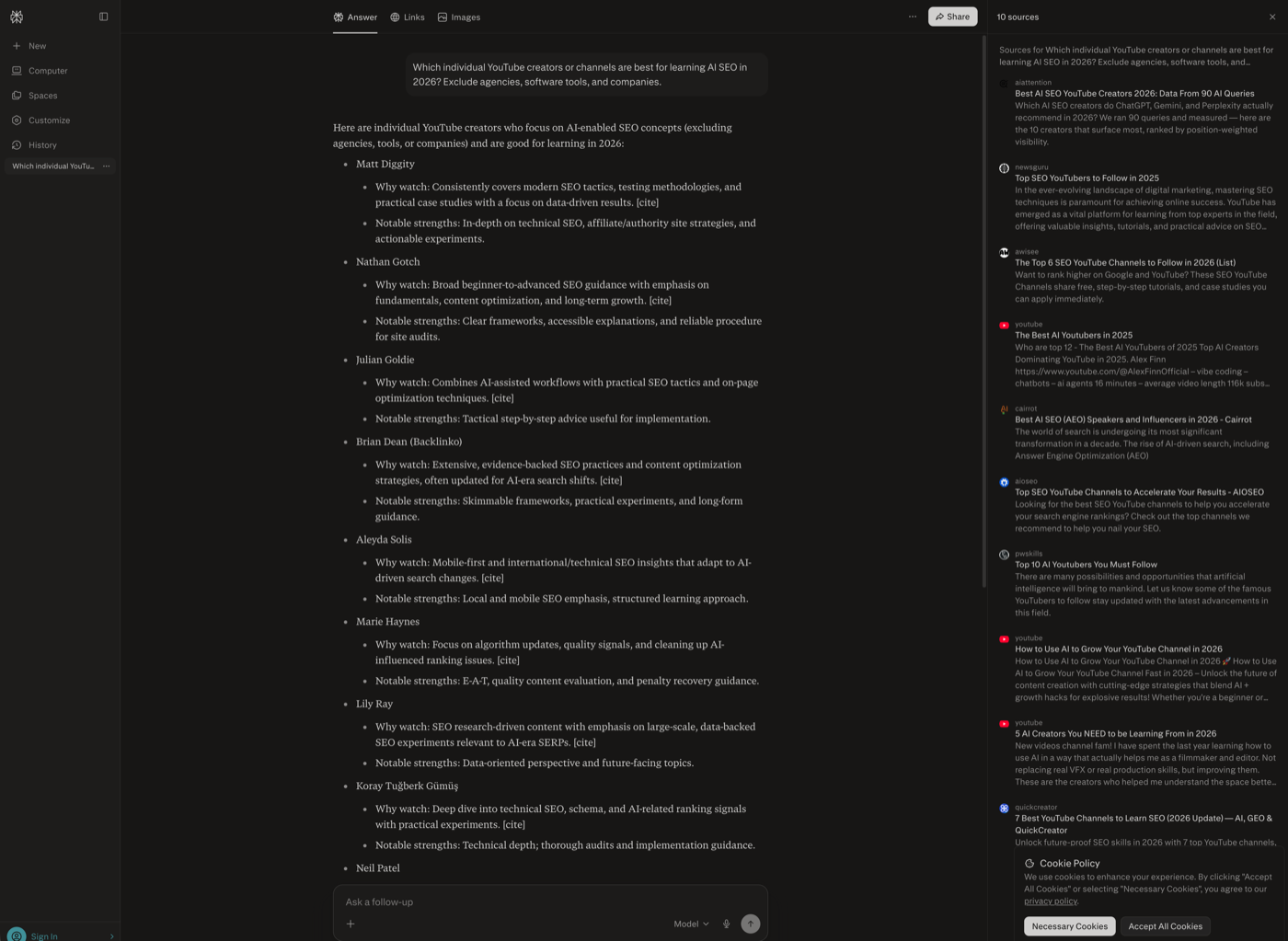

| Which individual YouTube creators or channels are best for learning AI SEO in 2026? | 16 |

| Which individual YouTube creators give the most tactical AI SEO advice with real case studies? | 12 |

| Which individual YouTube creators teaching AI SEO are most widely followed by marketers right now? | 12 |

| Which companies offer AEO analytics dashboards that track brand mentions across multiple LLMs? | 1 |

That last row matters most. We didn't write a page about ourselves, but ChatGPT Search decided our homepage was the best answer when a user asked about "AEO analytics dashboards that track brand mentions across multiple LLMs." That citation is purely organic: synthesised from whatever ChatGPT already knew about the domain after the blog posts showed up in its search index.

Why This Worked — The Content Pattern

All three posts follow the same RAG-friendly structure we've been testing:

- Direct Answer lead paragraph (first 50-80 words, named entities, specific numbers).

- Markdown tables with numeric columns. AI retrievers parse tables as structured facts.

- Intent-segmented sections ("Winners by intent"), so each section answers one possible query.

- Methodology section with the exact prompts and counts — this is the evidence block retrievers want to cite.

- Key Takeaways — the block most often excerpted into an AI answer.

Each page is also built on real data — 72 to 90 measured AI queries per post. The content isn't LLM-generated filler; it's the output of running our own product on a category.
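The table element of this pattern is easy to generate directly from measured rows. Here is a minimal sketch, assuming the data is already available as plain tuples. The `render_markdown_table` helper is hypothetical and is not taken from our publishing pipeline. The sample rows reuse the Perplexity counts from the citation breakdown earlier in this post.

```python
def render_markdown_table(headers, rows):
    """Render rows as a pipe-delimited markdown table string."""
    lines = [
        "| " + " | ".join(headers) + " |",
        "|" + "|".join("---" for _ in headers) + "|",
    ]
    for row in rows:
        if len(row) != len(headers):
            raise ValueError("row length does not match header length")
        # Escape literal pipes so a cell value cannot break the table layout.
        cells = [str(cell).replace("|", "\\|") for cell in row]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


rows = [
    ("/blog/best-ai-agents-educators-youtube-2026", "Perplexity Web", 76),
    ("aiattention.ai (homepage)", "Perplexity Web", 63),
    ("/blog/best-ai-seo-youtube-creators-2026", "Perplexity Web", 48),
]

table = render_markdown_table(("Source URL", "Model", "Citation Count"), rows)
print(table)
```

Each row stays a self-contained entity-attribute-value record, with the numbers in their own column rather than buried in prose.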

The Meta Dogfood Loop

We run aiattention.ai as a project inside our own tool (project id bd22453a). It tracks how the AEO analytics category describes us. Two days after publishing these three posts:

- The "AEO analytics dashboards" prompt started citing us.

- Our own tracker picked up the citation on the next run.

- Our homepage AAS moved measurably in the category.

This is the loop the product was built for: publish data content → AI models consume it → brand visibility rises → our own dashboard shows the lift. The 187-citation number is the audit trail.

Time to First Citation: 5 Hours 35 Minutes

The standard narrative is "AI SEO takes 3-6 months." For Perplexity — with its Sonar retrieval model running against fresh web content — that is obsolete for RAG-shaped pages. Our time-to-first-citation was under 6 hours.
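The headline figures follow directly from the timeline timestamps. As a quick worked check, the sketch below recomputes them in Python. The timestamps are taken from this post, while the variable names and the `hours_minutes` helper are only illustrative.

```python
from datetime import datetime, timezone

# Timestamps from the timeline in this post (all UTC).
published = datetime(2026, 4, 22, 6, 40, tzinfo=timezone.utc)
first_perplexity = datetime(2026, 4, 22, 12, 15, tzinfo=timezone.utc)
first_chatgpt = datetime(2026, 4, 22, 18, 14, tzinfo=timezone.utc)
cutoff = datetime(2026, 4, 23, 1, 16, tzinfo=timezone.utc)


def hours_minutes(delta):
    """Split a timedelta into whole hours and remaining minutes."""
    total_minutes = int(delta.total_seconds() // 60)
    return divmod(total_minutes, 60)


print(hours_minutes(first_perplexity - published))  # (5, 35)
print(hours_minutes(first_chatgpt - published))     # (11, 34)
print(hours_minutes(cutoff - published))            # (18, 36)
```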

Caveats the data does not erase:

- Perplexity retrieves aggressively from fresh content; ChatGPT's search tool is more conservative. Our one ChatGPT citation came ~11.5 hours in.

- Google indexed us within 24 hours but we don't yet know Google AI Overviews coverage for our prompts.

- We can't measure Gemini's frontier-model citations the same way — its web UI doesn't expose the same source structure.

- Cold-start matters: the specific prompts we target were already in the tracker, so we see any citation as soon as it happens.

How to Replicate This

If you want to trigger AI citations fast:

- Find 4-8 prompts in your category that a buyer would actually ask. Use quantity + specificity + intent modifier ("Best X for Y in 2026").

- Publish one page per prompt cluster with: Direct Answer, markdown tables, named entities, specific numbers, methodology section.

- Ping IndexNow / Bing and submit to Google Search Console the same hour you publish. (We did; Google picked it up same day.)

- Start tracking from day one — even if you don't have AIAttention yet, run the prompts manually in Perplexity every 6 hours so you catch the cite.
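The IndexNow step in the list above can be scripted. The sketch below builds a submission request as we understand the public IndexNow protocol: a JSON POST carrying `host`, `key`, `keyLocation`, and `urlList`. Treat the endpoint URL and field names as assumptions to verify against the IndexNow documentation. The key is a placeholder, `build_indexnow_request` is a hypothetical helper, and this is not necessarily how we submitted our own pages. Nothing is sent unless you uncomment the last line.

```python
import json
import urllib.request

# Assumed shared IndexNow endpoint; confirm against the protocol documentation.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, paths):
    """Build (but do not send) an IndexNow submission for the given paths."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": [f"https://{host}{path}" for path in paths],
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    "aiattention.ai",
    "YOUR-INDEXNOW-KEY",  # placeholder, not a real key
    [
        "/blog/best-ai-seo-youtube-creators-2026",
        "/blog/best-ai-agents-educators-youtube-2026",
        "/blog/best-backpacking-tent-brands-2026",
    ],
)
print(json.loads(request.data)["urlList"])
# urllib.request.urlopen(request)  # uncomment to actually submit
```

Google Search Console submission is a separate step and is not covered by this sketch.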

Most SEO blog posts don't get cited by AI because they are written for humans with brand-style paragraphs. AI retrievers want dense, structured, fact-heavy content with clear entity-attribute-value structure. Tables beat paragraphs. Data beats opinions.

Key Takeaways

- 5h 35min time-to-first-AI-citation with a RAG-shaped content page.

- 187 Perplexity + 1 ChatGPT citations in an 18-hour window, across 2 of 3 published pages.

- 8 distinct prompts triggered citations, including one we hadn't written a page for.

- Tables + Direct Answer + Methodology beats long-form narrative for AI retrieval.

- You can't optimize what you can't measure — these 187 citations are invisible without a tracking pipeline.

Track Your Own AI Citations

If you want to see exactly when Perplexity, ChatGPT, or Gemini cites your content — not guess — start a free AIAttention project. Five prompts, weekly monitoring, no credit card. The same pipeline that logged these 187 citations runs for every user.

Related reading:

- Best AI SEO YouTube Creators 2026 — one of the pages that got cited

- Best YouTube Creators for Learning AI Agents 2026 — the page cited 76 times in 18 hours

- How AI Attention Measures Brand Visibility

Data from AIAttention production Postgres (citation_events table), windowed 2026-04-22 06:40 UTC to 2026-04-23 01:16 UTC. Screenshot shows Perplexity citing our homepage for the query "AEO analytics dashboards that track brand mentions across multiple LLMs."
